05 – Data and Data Analysis Method: The Big Data Approach

Hello everybody!

I hope you spent nice holidays and you came back to work cheerful and full of energy!

Today, as promised, I’m going to explain a bit more about the data we collect and about the type of analysis we intend to perform. As I previously explained, the objective of the project is: to assess the relationship between truck fleet fuel consumption and road pavement conditions.

Data about the fuel usage are collected by Microlise Ltd whilst data about road pavement conditions are collected by TRL Ltd (but owned by Highways England). In both cases, data are stored in very big databases which can be remotely queried whenever needed.

This study is going to consider only data about trucks travelling along the English SRN (Strategic Road Network). The SRN is completely managed by the Highways England authority. It is around 7’000 kilometers long and is mostly made of motorways and ‘A’ roads. Although the SRN network represents just 2% of the length of all roads in England, it carries 1/3 of the entire traffic. Furthermore, 2/3 of all heavy-duty vehicles travel on the SRN. Considering the length of the SRN network, its traffic composition and the amount of trucks composing the fleet controlled by Microlise, considering just the SRN seems a reasonable choice for the data analysis.

In the first instance, only “few” data are considered to set up the data analysis method. More precisely, only data from the last three months, from one Microlise client and from only one road are considered.

Following the SAE (Society of Automotive Engineers) J1939 standard, Microlise collects, processes and stores data for its customers. Data are collected by black boxes (sort of on-board computers) that Microlise produces and installs black boxes on its customers’ vehicles. For example, data such as the instantaneous geographical position, the vehicle speed, the used gear, the driving direction, some engine data (e.g. torque, rotational speed, temperature, etc.), among many other parameters are recorded.

Each black box can record different parameters based on the customer request. Data are instantaneously recorded every time an “event” happens. It can be due to a technical problem of the vehicle, the driver braking, the vehicle stopping, etc. In any case, if no event happens in the meantime, the default is set to record data few minutes or couple of miles after the previous event.

Data are then stored in databases which can be remotely accessed any time. Because of dimension and safety reasons, data are stored remotely and many backups are available. Data are stored locally only when Microlise (or its partners) needs to analyze or process them. It is possible to apply filters and conditions while querying the database in order to extract just data we are interested in.

In order to understand the quantity of data we are dealing with, just imagine that the fleet currently controlled by Microlise in the UK is composed of something like 60’000 vehicles and that billions of kilometers have been traveled by the entire fleet last year. Each customer have hundreds of gigabytes of data per year stored in Microlise databases.

It's a huge amount of data and the fact of handling different data formats further complicates the situation.

But crew, no worries at all, I’ll find out a solution!

Actually, I already have some ideas and I’m already working on data, but as usual any suggestion is welcome!

First of all, let’s introduce my workmate: my “little monster”. It is the computer I use for work. It is quite a powerful machine with 32 GB of RAM and an 8-core processor with up to 3.5GHz clock frequency.

Yes, maybe it is not the best computer available on the market but it is not bad either, right?

But let’s introduce my idea for data analysis:

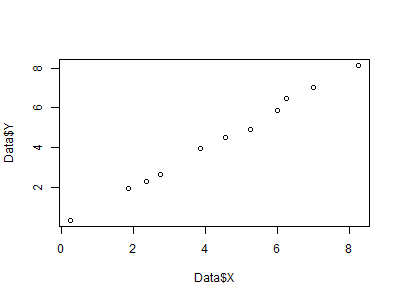

Based on the amount of data to handle and the discrepancies in data format, a “Big Data” approach appears to be compulsory. As mentioned before, I will use the computer programming language “R” to analyze the data (have a look here if you haven’t read my previous article).

Actually, R may not look the right software to use with “Big Data”, given that it store variables in RAM, but I’m quite confident in my “little monster” capabilities. It is a 64-bit OS machine with powerful processor, lots of RAM and, just in case, sampling of data and storing objects in hard disk can be two possible solutions for reducing the required computational power (have a look here for more information about handling “Big Data” using R).

In spite of the issue I mentioned above, R has many other qualities. It is specifically designed to perform statistical analysis, it is light but powerful software and least but not last, it is able to deal with different data formats easily and quickly too.

- “readxl”, for read and write Microsoft® Excel files,

- “RODBC”, which contains functions able to handle Microsoft® SQL databases and

- “rgdal”, which is the library containing functions used for dealing with shapefiles.

“R” usually extracts data and it can store them in sort of “standard” database variables called data frames.

Initially, the script considers data about fuel usage and compares consecutive events (of the same vehicle) to calculate the average fuel consumption as the ratio of the difference of used fuel between the starting and the ending points (events) to the traveled distance.

Some filters are applied in order to consider the calculated fuel consumption reliable:

1) events are consecutive and appertaining to the same vehicle;

2) the difference in speed between the two events is lower than 2.5km/h;

3) the difference between the average speed (calculated as the ratio of traveled distance to travelling time) and the average of the starting and ending speeds is lower than 2.5km/h;

4) traveled distance is higher than 500 meters;

5) traveled time is lower than 120 seconds;

5) traveled time is lower than 120 seconds;

These filters are set in order to ascertain that the trucks have been driven at (almost) constant speed along the road segment, driving for a sufficiently large distance.

A new data frame, named “Data.T” is created when the five conditions above are matched. It contains a record for each travel performed by each truck of the fleet between each couple of its events. Each record refers, basically, to specific part of the travel of a specific truck and it contains data about the plate number, the wheel configuration, the size of the engine, the euro type of the engine, the average speed, the fuel consumption, the starting and ending positions, among many others useful parameters. For instance, considering just one customer truck fleet driving on the M18, data filtering leads to more than 8000 “fictitious” travels that can be analyzed.

Later, the script extracts data about road pavement conditions. It reads the attribute table and it assigns specific coordinates along the pavement of the considered road to each record. Then it stores the average road pavement conditions (namely a geometric parameter, or a measurement taken directly from the pavement) filtered on the basis of the geographical position in different vectors. It means that looping through both each record contained in “Data.T” and each record of the database containing the road pavement conditions, it calculates the average of road pavement conditions about data contained in the rectangle defined by the starting and ending point of the truck travel (stored in “Data.T”) and it stores the result in different vectors.

Consequently, the script appends each one of the generated vectors to “Data.T” assigning the averaged road conditions that cause specific averaged fuel consumption.

At this point, data can be filtered per different type of vehicle, different average speed, pavement material, geometry and road conditions in general.

Finally, the averages of vehicle fuel consumption related to the road conditions extreme values (from very good to very bad conditions) are compared. Doing so, it would be possible to evaluate which percentage of fuel consumption is influenced on average by road pavement conditions.

Alternatively, another solution could be to compare the average fuel consumption at the beginning of the road lifespan and at its end, but in this case many years of data collection are needed (on average the entire lifespan of a road is 20-years at least). Or another solution might be to compare the average fuel consumption before and after road maintenance. In fact, in this case, we just need to find out the databases containing data collected before and after when the maintenance have been performed.

Well, crew, that’s not all! It seems that we are on the right way for results, but it is clear I need to work more on the script before reaching the objective of the project. I mean, I already have many ideas for improving the analysis and for obtaining some more interesting results. But, as someone said: “It’s a long way to the top if you wanna Rock ‘n’ Roll” (AC/DC, 1975).

Please, e-mail any suggestion about possible developments for the project, possible collaborations, data analysis methods, software, conferences, meetings, seminars or about any other request at:

See you next month for a new post! Stay tuned!

Ah, please, talk about this project with friends and share the link to my blog with them! Thank you! ;)

Cheers,

FP13

Hai…you have posted great article, it really helpful to us.. I will refer this page to my friends; I hope they will like to read - Statistical data analysis Services

ReplyDelete